Introduction

DevOps teams often code right up to the deadline, leaving little opportunity to find and fix vulnerabilities before release. To smooth the development cycle and improve speed, shift-left security helps developers find and remediate vulnerabilities early in the development cycle rather than after it. This is a vital part of supporting the DevOps strategy.


As cloud computing enables the adoption of DevOps, DevOps teams also gain a unified platform for testing and deployment. However, for DevOps teams to embrace the cloud, security must be at the forefront of their considerations. A shift-left approach to security should begin the moment DevOps teams start developing the application and provisioning infrastructure. By using APIs, developers can integrate security into their toolsets and enable security teams to find issues early, so DevOps teams can move quickly without sacrificing security.
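As a minimal sketch of what shifting security left can look like in practice, the Python below scans source files for hard-coded credentials before code is merged and returns a non-zero exit code that a CI job could use to fail the build. The rule names, regular expressions, and the `scan_text`, `gate`, and `main` helpers are hypothetical and deliberately small; dedicated secret scanners ship far larger, tuned rule sets.

```python
import re

# Illustrative rules only (hypothetical); real scanners use many more, tuned patterns.
SECRET_PATTERNS = {
    # AWS access key IDs are 20 characters beginning with "AKIA".
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*=\s*['\"][^'\"]+['\"]"),
}


def scan_text(path: str, text: str) -> list[tuple[str, int, str]]:
    """Return (path, line_number, rule_name) for every line that matches a rule."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((path, lineno, rule))
    return findings


def gate(files: dict[str, str]) -> int:
    """Scan every file; report findings and return 1 if any were found, else 0."""
    findings = [f for path, text in files.items() for f in scan_text(path, text)]
    for path, lineno, rule in findings:
        print(f"{path}:{lineno}: possible secret ({rule})")
    return 1 if findings else 0


def main(paths: list[str]) -> int:
    """Hypothetical CI entry point: read the given files and return an exit code."""
    files = {}
    for path in paths:
        with open(path, encoding="utf-8", errors="replace") as fh:
            files[path] = fh.read()
    return gate(files)
```

A CI step could run `sys.exit(main(sys.argv[1:]))` over the files changed in a commit, so a leaked credential blocks the merge instead of being discovered after deployment.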

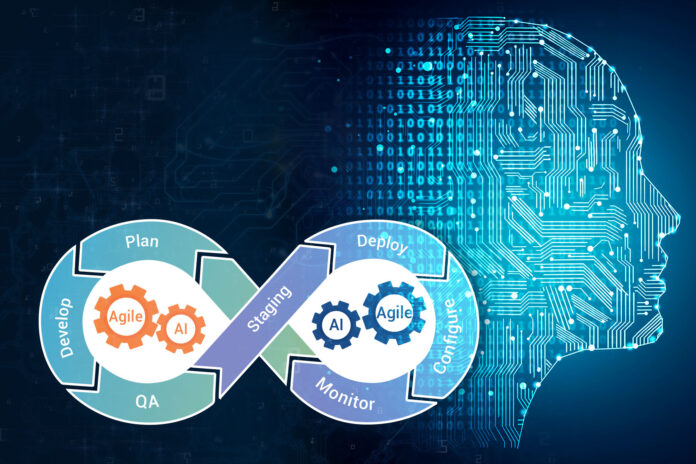

Automating and integrating security into the CI/CD pipeline transforms the DevOps culture into its close relative, DevSecOps, which extends the DevOps approach by focusing on building security into the process. DevOps has changed many enterprises at their core by profoundly transforming their approach to application development and management. This strong emphasis on collaboration, agility, and customer centricity is also required for Data Science models to succeed.

Data Science covers part of data analysis, particularly the part that uses programming and complex mathematical and statistical methods. It does not fully cover Data Analytics, yet it reaches beyond the domain of business analytics.


The Need For DevOps In Data Science

The CI/CD pipeline represents an attractive target for malicious actors. Its criticality means that a compromise could significantly affect business and IT operations. Baking security into the CI/CD pipeline enables organizations to pursue their digital initiatives with confidence and security. By shifting security left, organizations can identify misconfigurations and other security risks before they affect customers.
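To illustrate catching misconfigurations before they reach customers, here is a minimal policy check that a pipeline stage could run against container definitions. The field names loosely mirror a Kubernetes container spec (`image`, `securityContext.privileged`, `securityContext.runAsNonRoot`), but the input is assumed to be an already-parsed Python dict, and `check_container` and `check_manifest` are hypothetical helpers; the three rules are examples, not a complete policy.

```python
def check_container(spec: dict) -> list[str]:
    """Return human-readable problems found in one container definition."""
    name = spec.get("name", "<unnamed>")
    image = spec.get("image", "")
    security = spec.get("securityContext", {})
    problems = []

    # An image with no tag, or with ":latest", is not pinned, so deployments are
    # not reproducible. (A digest reference also contains ':' and counts as pinned.)
    last_segment = image.rsplit("/", 1)[-1]
    if ":" not in last_segment or image.endswith(":latest"):
        problems.append(f"{name}: image '{image}' is not pinned to a version")

    # Privileged containers get broad access to the host.
    if security.get("privileged", False):
        problems.append(f"{name}: runs in privileged mode")

    # Without runAsNonRoot, the container may run as root.
    if not security.get("runAsNonRoot", False):
        problems.append(f"{name}: does not require a non-root user")

    return problems


def check_manifest(containers: list[dict]) -> list[str]:
    """Aggregate problems across all containers; an empty list means the stage passes."""
    return [problem for spec in containers for problem in check_container(spec)]
```

Running such a check on every commit turns "misconfiguration found in production" into "pipeline stage failed", which is the point of moving security left.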

In Prometheus, the current block of incoming samples is kept in memory and protected against crashes by a write-ahead log (WAL) that can be replayed when the Prometheus server restarts. Write-ahead log files are stored in the wal directory in 128MB segments. These files contain raw data that has not yet been compacted, so they are significantly larger than regular block files. Prometheus retains a minimum of three write-ahead log files, and high-traffic servers may retain more than three WAL files in order to keep at least two hours of raw data. Because local storage is not clustered or replicated, it is not arbitrarily scalable or durable in the face of drive or node outages and should be managed like any other single-node database. RAID is suggested for storage availability, and snapshots are recommended for backups. If local storage becomes corrupted, you can also try removing individual block directories, or the WAL directory, to resolve the problem. Note that this means losing approximately two hours of data per block directory. Again, Prometheus's local storage is not intended to be durable long-term storage; external solutions offer extended retention and data durability.
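The write-ahead-log idea is easier to see in miniature. The sketch below is a toy key/value store, not Prometheus's implementation (the Prometheus TSDB is written in Go and uses segmented WAL files and checkpoints). It only shows the core rule: append each write to a log and flush it to disk before applying it in memory, then rebuild state on restart by replaying the log. The JSON-lines record format and the `TinyWALStore` name are assumptions made for the example.

```python
import json
import os
import tempfile


class TinyWALStore:
    """Toy in-memory store whose writes survive a crash via a write-ahead log."""

    def __init__(self, wal_path: str):
        self.wal_path = wal_path
        self.data = {}
        self._replay()

    def _replay(self) -> None:
        """Rebuild in-memory state from the log, dropping any torn final record."""
        if not os.path.exists(self.wal_path):
            return
        good_bytes = 0
        with open(self.wal_path, "rb") as wal:
            for raw in wal:
                if not raw.endswith(b"\n"):
                    break  # the last write never completed
                try:
                    record = json.loads(raw)
                except ValueError:
                    break  # corrupted record: stop at the last good one
                self.data[record["key"]] = record["value"]
                good_bytes += len(raw)
        # Truncate the torn tail so later appends start on a clean record boundary.
        with open(self.wal_path, "r+b") as wal:
            wal.truncate(good_bytes)

    def put(self, key: str, value: float) -> None:
        """Log first (and fsync), then apply the write in memory."""
        with open(self.wal_path, "a", encoding="utf-8") as wal:
            wal.write(json.dumps({"key": key, "value": value}) + "\n")
            wal.flush()
            os.fsync(wal.fileno())
        self.data[key] = value


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "wal.log")
        TinyWALStore(path).put("cpu_usage", 0.42)
        # Simulate a restart: a fresh instance recovers its state from the log alone.
        print(TinyWALStore(path).data)
```

The same trade-off the Prometheus documentation describes applies here: the log protects against a process crash, but it lives on one disk, so it is no substitute for replication or backups.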

They needed to work out where the software would be used and by whom, and to fully understand the complete processes and documentation, including specification, development, deployment, and support. As technology advances rapidly, demand for features, users, and speed keeps growing, so software engineers addressed this by specializing in distinct roles. That is how we arrived at the many different positions we have today. Since cloud-native aims to reduce time-to-market and bring more efficiency to organizations, DevOps streamlines people, tools, and systems, contributing to the overall success of the project. This is what makes cloud-native DevOps a logical step toward improved productivity. You achieve this through technologies such as Kubernetes and containers, which can automate processes and make applications more scalable. These practices apply to nearly every part of the organization, which means changing the entire culture, including tools, people, and processes. When organizations realize that agile development requires both automation and cultural change to deliver quality applications faster, DevOps becomes a necessity. Managing multiple hybrid environments or streamlining infrastructure can become very complicated. That is why tools that align cloud-native and DevOps processes are rapidly gaining popularity.

With DevOps, you will try to automate as many processes as possible. However, you do not do this by endlessly adding tools. You need to choose the right tools and find the combination that best suits your application. The essence of DevOps, however, lies in unity and in applying the right practices that lead to increased productivity and improved processes.

While testing inside the execution pipelines makes things more direct and streamlined, it also makes them limited and incomplete. Continuous monitoring, on the other hand, can strengthen the entire process by highlighting every malfunction that occurs even after testing. Teams often treat security monitoring as a capability that belongs inside their CI/CD workflows.
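Continuous monitoring can be as simple as watching a live signal after deployment. The hypothetical `ErrorRateMonitor` below keeps a sliding window of recent request outcomes and signals an alert when the failure rate exceeds a threshold. The window size and the 5% default threshold are illustrative assumptions; a production setup would typically export such metrics to a monitoring system rather than compute them in-process.

```python
from collections import deque


class ErrorRateMonitor:
    """Sliding-window failure-rate check over the most recent requests."""

    def __init__(self, window: int = 100, threshold: float = 0.05):
        if window <= 0:
            raise ValueError("window must be positive")
        self.window = window
        self.threshold = threshold
        # deque(maxlen=...) discards the oldest outcome automatically.
        self.outcomes = deque(maxlen=window)

    def record(self, ok: bool) -> bool:
        """Record one request outcome; return True when the alert condition holds."""
        self.outcomes.append(ok)
        if len(self.outcomes) < self.window:
            return False  # not enough data yet to judge
        failures = sum(1 for outcome in self.outcomes if not outcome)
        return failures / self.window > self.threshold
```

In practice, `record` would be fed from request-handling code or a log consumer, and a `True` result would page the on-call team; this catches failures that only appear under real traffic, after every pipeline test has passed.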

Implementing a separate tool that handles security is a critical move that helps DevOps teams pivot and avoid vulnerabilities in the process. Adopting cloud-native DevOps should be a gradual process with a lot of learning along the way. Expecting an organization that has been using on-premises applications to immediately move all of its designs and platforms into a single cloud-native architecture is unrealistic. You may be able to build new cloud-native applications quickly, but migrating existing applications will take time.

New datasets lead to the training and development of new ML models that must be made available to users. Some of the best practices of continuous integration and deployment (CI/CD) are applied to ML lifecycle management. Every version of an ML model is packaged as a container image that is tagged with its own version. DevOps teams bridge the gap between the ML training environment and the model deployment environment through sophisticated CI/CD pipelines. Once a fully trained ML model is available, DevOps teams are expected to host the model in a scalable environment. DevOps teams use containers to provision development environments, data processing pipelines, training infrastructure, and model deployment environments. Emerging technologies such as Kubeflow and MLflow focus on enabling DevOps teams to tackle the new challenges involved in managing ML infrastructure. Machine learning brings a new dimension to DevOps: alongside developers, operators must collaborate with data scientists and data engineers to help organizations embrace the ML paradigm.
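One way to give every model version its own container image tag is to combine the human-readable version with a short hash of the model artifact, so the same version string can never silently point at different weights. This is a minimal sketch: the registry path, model name, and `model_image_ref` helper are hypothetical, and the tag check follows Docker's documented tag rules (letters, digits, underscores, periods, and hyphens; no leading period or hyphen; at most 128 characters). Docker repository names must also be lowercase, which the example assumes rather than checks.

```python
import hashlib
import re

# Docker tag rule: first char in [A-Za-z0-9_], then up to 127 more of [A-Za-z0-9_.-].
_VALID_TAG = re.compile(r"[A-Za-z0-9_][A-Za-z0-9_.-]{0,127}")


def model_image_ref(registry: str, model_name: str, version: str, artifact: bytes) -> str:
    """Build a reference like registry/model:1.3.0-<first 12 hex chars of SHA-256>."""
    content_hash = hashlib.sha256(artifact).hexdigest()[:12]
    tag = f"{version}-{content_hash}"
    if not _VALID_TAG.fullmatch(tag):
        raise ValueError(f"not a valid image tag: {tag!r}")
    return f"{registry}/{model_name}:{tag}"


if __name__ == "__main__":
    # Stand-in bytes; a real pipeline would read the serialized model file.
    print(model_image_ref("registry.example.com/ml", "churn-model", "1.3.0", b"example weights"))
```

Deriving the tag from the artifact's content means retraining on a new dataset always produces a new, traceable image, which is what lets the CI/CD pipeline promote or roll back individual model versions.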

DevOps In Data Science And Its Applications In Technology

Most cloud providers offer dedicated services for this, providing seamless integration and management for end users. Some of the common providers are Amazon Web Services, Microsoft Azure, and Google Cloud. These cloud service providers also support AI, image processing, GPU computing, and high-volume data analysis. Data science solutions are not just a piece of code to work with: for the end user to consume a model, it must work with a front-end application as well as the back-end tooling. From a data science perspective, more and more freelancers, consultants, and remote teams are working on different problems and challenges. With more collaborative teams across the globe, an organization needs a structured development process that serves its end users. Apart from these benefits of the cloud, we also gain an efficient way to enable logging, manage costs, build dashboards, and derive insights.

Applications that depend on high-resolution images, audio, and video data can be processed quickly, and designing, architecting, and implementing them becomes easier. Applications must be delivered quickly, according to customer needs, and in an agile way that adapts to a rapidly changing context. The value of data has also grown many-fold. Organizations now need to source, tap, distill, analyze, interpret, and apply data for customer satisfaction decisions and insights. A Global Market Insights report attributes this growth to strong demand to shorten the system development life cycle, enable rapid software development across many industries, and increase business efficiency through fast application delivery, as well as to a growing inclination of enterprises toward data-intensive business strategies. Understandably, both of these technology areas have enjoyed rapid and significant growth in the last few years. Even when models are ready for production, problems keep arising from numerous dependencies, environments, and skills gaps.

That hinders the ability of models to reach the final stage of real exposure and impact. The Data Science Lifecycle revolves around the use of machine learning and other analytical methods to produce insights and predictions from data in order to achieve a business objective. The complete process includes various steps such as data cleaning, preparation, modeling, model evaluation, and so on. Data scientists and developers use a variety of languages, libraries, toolkits, and development environments to build machine learning models. Popular languages for ML development, such as Python, R, and Julia, are used within development environments based on Jupyter Notebooks, PyCharm, Visual Studio Code, RStudio, and Juno. These environments must be available to the data scientists and developers solving ML problems. Provisioning, configuring, scaling, and managing the clusters behind these environments are typical DevOps tasks. DevOps teams may need to write scripts to automate the provisioning and configuration of infrastructure for a variety of environments. They will also need to automate the termination of instances once the training job is complete. These tasks are central to the successful implementation of AI in business. However, one important capability is becoming popular, namely DevOps for data science, which opens the discussion of why DevOps matters in Data Science.
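The lifecycle stages named above (cleaning, preparation, modeling, evaluation) are often wired together as one repeatable pipeline, so the same steps run identically on a laptop and in CI. The pure-Python sketch below shows that shape under deliberately simple assumptions: `(x, y)` records, a deterministic every-fifth-row test split, a one-variable least-squares line as the "model", and mean absolute error as the metric. All function names are hypothetical; real projects would typically use libraries such as pandas and scikit-learn for each stage.

```python
from statistics import mean


def clean(rows):
    """Data cleaning: drop records with a missing feature or label."""
    return [(x, y) for x, y in rows if x is not None and y is not None]


def split(rows, test_every=5):
    """Data preparation: deterministic split, every `test_every`-th record held out."""
    train = [row for i, row in enumerate(rows) if i % test_every != 0]
    test = [row for i, row in enumerate(rows) if i % test_every == 0]
    return train, test


def fit(train):
    """Modeling: ordinary least squares for y = a + b * x."""
    xs = [x for x, _ in train]
    ys = [y for _, y in train]
    mx, my = mean(xs), mean(ys)
    # Assumes the training xs are not all identical (otherwise the slope is undefined).
    b = sum((x - mx) * (y - my) for x, y in train) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return a, b


def evaluate(model, test):
    """Model evaluation: mean absolute error on the held-out records."""
    a, b = model
    return mean(abs(a + b * x - y) for x, y in test)


def run_pipeline(raw_rows):
    """Run every stage in order so the whole lifecycle is one repeatable call."""
    train, test = split(clean(raw_rows))
    model = fit(train)
    return model, evaluate(model, test)
```

Because `run_pipeline` is a single deterministic entry point, a CI job could call it on a fixed sample dataset and fail the build if the error metric regresses, which is one concrete way DevOps practices carry over to data science work.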

But why does DevOps matter, and why is the term becoming popular in the cloud industry? In this article, we discuss this in detail, including the importance of DevOps in data science. Developers work under their own chain of command, typically project managers. They generally want to get all the features into the product right away. For data scientists, this means tuning all the design choices and parameters of the model. At the other end is IT. IT specialists ensure that all the networks, firewalls, and servers are working correctly, and they also handle cybersecurity. You may be wondering why DevOps matters here. The answer is simple: there is a growing demand in the IT industry for someone who fits both the operations and the developer profile. This suggests that the need for DevOps will increase in the coming years. In the IT industry, DevOps is described as a cultural approach. Integrating DevOps into software development and data science has many advantages, which we discuss later. Why is DevOps popular? Because DevOps lets organizations build and improve products faster than traditional methods do. Now, let us discuss the importance of DevOps. It can be very difficult to tell whether a specific application is working or not, and when developers have to submit a request to find out, cycle times grow. With the help of DevOps, such issues can be prevented while maintaining high quality at the same time.

Conclusion

The primary reason teams fail is software defects. With a shortened development cycle, DevOps promotes regular code releases. This makes it much easier to identify faulty code, so the team can spend its time lowering the chances of implementation failure by applying effective programming standards. When there is a high level of trust within a team, all its members can learn and grow effectively, and the team works best to bring products to market faster. DevOps makes the entire process transparent, which in turn motivates the staff to work toward a shared goal. That is why every organization that works with cloud services needs DevOps.