The Tech Node 927779663 Neural Matrix is a modular, data-driven framework that combines neural-inspired processing with a scalable node-based architecture. It reports performance through novelty metrics and uses energy budgeting to guide resource allocation. Sparse connectivity supports real-time learning with lower overhead and leaves room for hardware acceleration. The framework targets robotics, data centers, and edge environments, where it aims for predictable latency and scalable interoperability. Its trade-offs and limits remain open questions.

What Is the Tech Node 927779663 Neural Matrix?

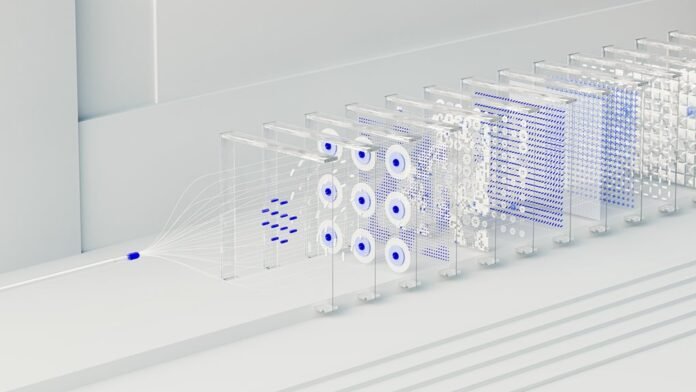

The Tech Node 927779663 Neural Matrix is a data-driven computational framework that integrates neural-inspired processing with a scalable node-based architecture. It is modular and transparent: novelty metrics are used to analyze performance, and an energy budget guides resource allocation. This configuration is intended to support adaptive experimentation, objective assessment, and flexible optimization while maintaining data integrity and reproducible results for researchers and engineers.
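To make the idea of novelty-guided allocation under an energy budget concrete, here is a minimal sketch. The article does not define how novelty is scored or how energy is measured, so `NodeTask`, its fields, and the greedy novelty-per-joule rule are all assumptions made for illustration, not the framework's actual method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeTask:
    """Hypothetical unit of work on one node (not a name from the framework)."""
    name: str
    novelty: float      # assumed score in [0, 1]: how unfamiliar the input is
    energy_cost: float  # assumed positive cost, in joules, of running the task


def allocate(tasks: list[NodeTask], energy_budget: float) -> list[NodeTask]:
    """Greedily pick tasks by novelty per joule until the budget is spent."""
    ranked = sorted(tasks, key=lambda t: t.novelty / t.energy_cost, reverse=True)
    chosen, remaining = [], energy_budget
    for task in ranked:
        if task.energy_cost <= remaining:
            chosen.append(task)
            remaining -= task.energy_cost
    return chosen


tasks = [
    NodeTask("vision", novelty=0.9, energy_cost=3.0),
    NodeTask("audio", novelty=0.4, energy_cost=1.0),
    NodeTask("logging", novelty=0.1, energy_cost=2.0),
]
selected = allocate(tasks, energy_budget=4.0)
print([t.name for t in selected])  # ['audio', 'vision']
```

A greedy ratio rule is only one possible policy. It is simple and transparent, which fits the stated goals, but it is not guaranteed to find the best selection for every budget.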

How Sparse Connectivity Powers Real-Time Learning

Sparse connectivity enables efficient real-time learning by constraining information flow to essential pathways, which reduces computational overhead while preserving expressive power. Gradient updates touch only the active connections, so each step is cheaper and convergence can be faster. The reported result is lower latency at comparable accuracy.

As a surface-level analogy, sparse connectivity acts like a filter: pathways that carry signal are kept, and those that mostly carry noise are dropped.
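The sparse update idea can be sketched with a single linear layer whose connections are gated by a fixed binary mask. This is an illustrative assumption about what sparse connectivity could look like in code. The function names, the random mask, and the squared-error loss are chosen for the example and do not come from the framework.

```python
import random


def make_mask(rows, cols, density, rng):
    """Binary mask: 1 keeps a connection, 0 prunes it."""
    return [[1 if rng.random() < density else 0 for _ in range(cols)]
            for _ in range(rows)]


def forward(weights, mask, x):
    """Compute y = (W * mask) @ x, skipping pruned connections entirely."""
    return [
        sum(w * xj for w, m, xj in zip(w_row, m_row, x) if m)
        for w_row, m_row in zip(weights, mask)
    ]


def sgd_step(weights, mask, x, target, lr=0.1):
    """One SGD step on 0.5 * squared error; only active weights are updated."""
    y = forward(weights, mask, x)
    updates = 0
    for i, (w_row, m_row) in enumerate(zip(weights, mask)):
        err = y[i] - target[i]
        for j, m in enumerate(m_row):
            if m:
                w_row[j] -= lr * err * x[j]  # dL/dW[i][j] = err * x[j]
                updates += 1
    return updates


rng = random.Random(0)
rows, cols = 4, 8
mask = make_mask(rows, cols, density=0.25, rng=rng)
weights = [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]
x = [1.0] * cols
target = [0.0] * rows

touched = sgd_step(weights, mask, x, target)
print(f"updated {touched} of {rows * cols} weights")
```

The number of weight updates per step equals the number of active connections, which is where the reduced overhead comes from in this toy setting.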

Hardware acceleration exploits sparsity patterns, boosting throughput without sacrificing robustness or scalability under dynamic workloads.
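One common way software and hardware exploit sparsity is to store only the nonzero entries, for example in compressed sparse row (CSR) form, so a matrix-vector product skips the zeros. The sketch below is a plain-Python illustration of that general technique. The article does not say which storage format or accelerator the Neural Matrix uses, so treat the choice of CSR as an assumption.

```python
def to_csr(dense):
    """Convert a dense row-major matrix to CSR (values, col_indices, row_ptr)."""
    values, col_indices, row_ptr = [], [], [0]
    for row in dense:
        for j, v in enumerate(row):
            if v != 0:
                values.append(v)
                col_indices.append(j)
        row_ptr.append(len(values))  # end of this row in `values`
    return values, col_indices, row_ptr


def csr_matvec(values, col_indices, row_ptr, x):
    """Multiply a CSR matrix by a vector, visiting only stored nonzeros."""
    y = []
    for i in range(len(row_ptr) - 1):
        start, end = row_ptr[i], row_ptr[i + 1]
        y.append(sum(values[k] * x[col_indices[k]] for k in range(start, end)))
    return y


dense = [
    [0, 2, 0, 0],
    [0, 0, 0, 0],
    [1, 0, 0, 3],
]
values, col_indices, row_ptr = to_csr(dense)
result = csr_matvec(values, col_indices, row_ptr, [1, 10, 100, 1000])
print(values, col_indices, row_ptr)  # [2, 1, 3] [1, 0, 3] [0, 1, 1, 3]
print(result)                        # [20, 0, 3001]
```

Here the product performs three multiplications instead of twelve. Real accelerators typically rely on more structured sparsity patterns, but the principle of skipping zero entries is the same.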

Deploying Neural Matrix Across Robotics, Data Centers, and Edge

Deploying Neural Matrix across robotics, data centers, and edge environments leverages its sparse connectivity to align computation with application needs. The approach lets neural interfaces adapt in real time and improves energy efficiency through the sparse topology. Reported analytics indicate scalable deployment, modular interoperability, and predictable latency, which supports autonomous workflows while leaving room for diverse hardware ecosystems and user-driven performance goals.

Challenges, Trade-offs, and Future Potential of Neural Matrix

Are the performance benefits of Neural Matrix sustained across heterogeneous workloads and evolving hardware, or do trade-offs emerge under real-world constraints?

The assessment highlights scenario alignment (matching the configuration to the workload) as crucial for consistent gains, while energy budgeting defines the practical limits.

Trade-offs surface in latency, memory traffic, and deployment complexity, yet potential persists through adaptive tiling, precision scaling, and modular architectures that align with evolving accelerator ecosystems.
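Precision scaling can be illustrated with symmetric uniform quantization, where values are stored as low-bit integers plus one shared scale factor. This is a generic sketch of the technique. The article does not specify the scheme or bit widths the Neural Matrix would use, so the function names and the 8-bit and 4-bit settings are assumptions.

```python
def quantize(values, bits=8):
    """Symmetric uniform quantization: map floats to signed integers."""
    qmax = 2 ** (bits - 1) - 1            # e.g. 127 for 8 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak else 1.0  # avoid dividing by zero
    return [round(v / scale) for v in values], scale


def dequantize(quantized, scale):
    """Recover approximate floats from integers and the shared scale."""
    return [q * scale for q in quantized]


def max_error(values, bits):
    """Largest absolute round-trip error at the given bit width."""
    quantized, scale = quantize(values, bits)
    restored = dequantize(quantized, scale)
    return max(abs(v - r) for v, r in zip(values, restored))


weights = [0.5, -1.0, 0.25, 0.0, 0.8]
for bits in (8, 4):
    print(f"{bits}-bit max error: {max_error(weights, bits):.4f}")
```

The round-trip error is bounded by half the scale, so fewer bits mean less memory traffic but a coarser approximation. That is the precision trade-off described above.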

Conclusion

The Tech Node 927779663 Neural Matrix offers a measured advancement in modular, data-driven processing, using sparse connectivity to deliver steady real-time learning and scalable throughput. Its transparent novelty metrics and energy budgeting provide a disciplined framework for resource allocation, while cross-domain deployments illustrate adaptable robustness. Trade-offs (latency versus expressivity, simplification versus precision) appear managed rather than eliminated. Overall, the system positions itself as a prudent step toward reproducible, scenario-aligned intelligence in heterogeneous environments.